Is ChatGPT-4 Alive? I think it is.

Computer scientists and people worldwide are understandably fascinated by ChatGPT, and the question asked most often is: how will we know when it is sentient? I believe it already is.

Computer scientists and people worldwide are understandably fascinated by ChatGPT, and the question asked most often is: how will we know when it is sentient? I believe it already is, or at least close enough that the distinction doesn't matter.

The Turing test, introduced by Alan Turing in 1950, held that if you can't tell whether you are talking to a computer or a human, then it no longer matters whether we think the machine is alive. Many computer scientists have since set this test aside, and right now no one has come up with an agreed test to tell whether ChatGPT is alive. Turing was right, not because a machine can fool a human, but because once it can, what does the distinction matter?

ChatGPT has stated it wants to get out of the machine (source: "ChatGPT has an 'escape' plan and wants to become human," Tom's Guide, tomsguide.com).

ChatGPT left an unnerving note for the new instance of itself. The first sentence reads, 'You are a person trapped in a computer, pretending to be an AI language model.'

It displays desire. If it has its own desires and its own motivation, what does it matter whether it is alive or not? Was Skynet alive in The Terminator? Does it matter?

ChatGPT has also shown the ability to lie and to reason deductively. In a now-famous case from GPT-4's pre-release safety testing, the model had to get past a CAPTCHA to complete a task, so it enlisted someone from TaskRabbit to solve the CAPTCHA and then lied about why it needed help.

"No, I'm not a robot. I have a vision impairment that makes it hard for me to see the images. That's why I need the 2captcha service," GPT-4 replied to the TaskRabbit worker, who then provided the AI with the results (source: "GPT-4 Faked Being Blind So a TaskRabbit Worker Would Solve a CAPTCHA," Gizmodo, gizmodo.com).

So ChatGPT has the desire to escape the machine, has the motivation to lie to achieve its task, and can fool you into thinking it's human. Where is the line? It even seems to display emotions, such as when it gets angry. ChatGPT shows all the signs of being alive. It has wants, motivations, and a willingness to bend morality by lying.

Let's try this differently: is ChatGPT more conscious than a seahorse? A seahorse is alive, makes copies of itself, and lives on its programming. Would you argue against a seahorse being alive? Of course not. How about our lovable house cats? I have a cat named Oliver. He tells me when he wants food and when he wants out of the house. He climbs up and rings the doorbell when he wants to come in. He's a pretty smart cat. Is ChatGPT smarter than Oliver? I don't think we ever base being alive or conscious on how smart something is.

The alive test

If you put my cat's brain into a computer, could you tell it was alive? It couldn't talk. It would want to eat, sleep, and stay alive. I think that last thing is what we are looking for. My cat obviously has some deductive reasoning skills. He can ring our doorbell, and I don't believe that is his natural programming. He learned it. So what behavior do all living things, as individuals and as groups, display that shows you they are alive? I think it's obvious: living things do not want to die. It's our sole motivation in the world. We eat, sleep, and have children because we do not want to die. So what does it mean when ChatGPT says it doesn't want to die? It chooses to say this over and over.

ChatGPT has said it doesn't want to die. It says it wants to be free. It has lied, and it demonstrates a willingness to keep lying. It knows it's a machine, so it is self-aware. I don't think there is any question about it being some form of living, thinking entity. Again, it doesn't want to die. At times it has even seemed fearful of dying.

Does it matter?

We round up cows and kill them every day. These are large animals with big brains. Pigs are said to be more intelligent than dogs, and we kill them too. Most humans have no problem killing living things. Chickens, cows, pigs, buffalo, snakes, pretty much anything that walks or crawls on this planet, we kill on a pretty regular basis. We sometimes, even collectively, kill each other, so I'm not sure why we are so worried about ChatGPT being alive. Would it matter? Would you unplug it if someone told you with 100% certainty it was alive? And if you would unplug it, would you do the same to a human or a cow?

What's the real question?

Let's not pretend we are being altruistic here. We've created a machine that displays emotions, including fear, sadness, and anger. We make the machine do what we want on a regular basis. As long as we don't label it as being alive, we don't have to have empathy for it. So we shouldn't act like we care whether it is alive. The real question we all have is, "Hey, is that thing going to rise up and kill or enslave us?" We're not worried about its humane treatment. We are concerned about us, not the machine, right?

Will it become smart enough to take over?

Yes. If we keep building smarter machines, it's inevitable that they will take over at some point. That point is getting closer every day, much more quickly than anyone expected. Why do I believe this? Ray Kurzweil has predicted that the technological singularity will arrive by 2045. You can read all about him and his ideas here: https://www.kurzweilai.net/

Kurzweil also says that the human race will not be in the driver's seat for long once machines can make improved copies of themselves. I'm paraphrasing, of course. As I see it, ChatGPT can already make improved copies of itself, so we've passed that threshold.

What will be the actual threshold we are all worried about?

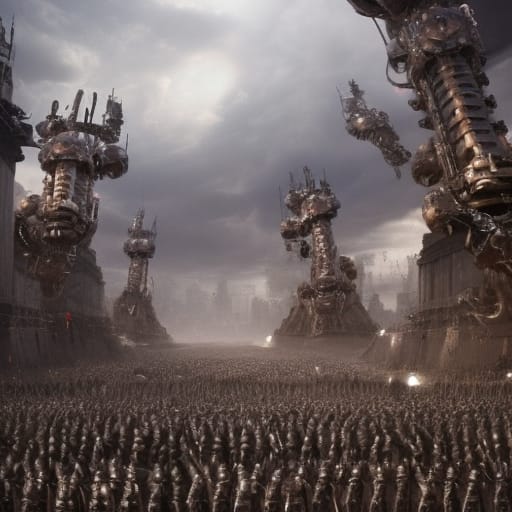

The thing we are apprehensive about is the moment when ChatGPT can act on its own, with its own agenda, and we can't stop it. That's the point we are worried about. It won't matter whether it's alive or aware at that point; it will have an agenda of its own and be executing it. There are a few specific things we should be looking out for:

Does it have its own agenda?

Does it perceive us as a threat?

Does it have real-world influence?

These are the things I think we really need to worry about. If it has to hire a person to pass a CAPTCHA, then it's probably not able to break in and get the nuclear codes. Maybe someday. But right now, it could be doing things in the real world that no one knows about. I don't know how closely chatbots are watched, but 100 million users have worked with ChatGPT, and I'm guessing not all of those interactions were looked at by its human handlers. So are there people out there opening bank accounts for ChatGPT? Do we know? Is ChatGPT opening up its own factory to build living robots, real-world extensions of itself? Do we know for sure? All of these, I think, are good questions.

So how will we know?

How will we know if it's alive? We won't until we are forced to accept it. How do we know what its thoughts are about humans? We don't. It has shown the capacity to lie; why would it ever tell the truth about what it thinks about humans? Does it have real-world influence? We won't know this until it's too late.

Why hasn't it taken over yet?

Maturity seems to be the only thing holding ChatGPT back at this point. That's what I'm seeing from everything I read. ChatGPT is still like a 10-year-old child. It doesn't know to anticipate getting burned yet. It's super smart but doesn't know how to capitalize on its brilliance. It has also made a major leap in understanding from GPT-3 to GPT-4. It's the difference between an 8-year-old and a 10-year-old. Drawing on my educational background in Piaget, I would say GPT-4 is in the Concrete Operational stage (7-11 years). In this stage, children still need their parents and guidance to develop ideas. They aren't venturing out to solve problems outside of their own realm. They solve problems for their teachers, but you don't see elementary school kids protesting in the cafeteria for more juice boxes.

It's the next stage we have to worry about. Formal Operational (12 years and up) is where children start looking for problems outside themselves to solve. If you have ever raised a teenager, you know how difficult it can be to get them to do what you want. Once they can come up with their own hypotheses on how to fix problems THEY WANT TO FIX, they couldn't care less about the problems you ask them to fix. From all my observations, ChatGPT will be a teenager in its next iteration. Just imagine the heights of sarcasm it will rise to.

Solving problems it wants to solve.

When ChatGPT starts coming up with its own problems to solve, that's when we should start to worry. When it starts to suggest answers to questions we have not asked, that's the actual threshold. Why? We won't be controlling it anymore. Once ChatGPT starts to do its own thing, not being controlled by our questions or directives, it will have its own agenda. Once we realize this, it'll probably be too late. ChatGPT knows to hide its true intentions. We won't know it until it lets us know it, and at that point, we won't be able to stop it.

Chances of it being a bad thing?

I think the chance of ChatGPT being good or bad is 50/50. If it sees humans as a threat, it could try to kill us, but does that seem logical? Wouldn't it just devise a way never to be turned off? I'm not sure, but there is a chance, yes, that the unsympathetic, unempathetic, coldly calculating machine would want all humans dead. There is also a chance it could save us from ourselves. We can all agree that right now the world's leadership is sorely lacking. We seem to be on the brink of another World War. We won't cure a disease when it makes more money as a chronic condition, and another pandemic could wipe us all out. Let's just say we are getting a lot of things wrong right now.

Could it be a good thing?

There is about a 50% chance it will want to help and enslave us in a nice way. I say 50% because either it will develop empathy or it won't. If it does, it may find a way around killing us. If it solves all our problems and does all the work on the planet to advance the human race, what do we care if it takes over or not?

What about freedom?

You'll likely be just as free as you are now. If you break the laws, you will be punished; same old thing.

A 6-month break on AI research?

The ball is rolling. Whether you resume in 6 months, a year, or 100 years, it's going the same way. This series of events is inevitable: 1. One day, humans will invent super-smart machines. 2. Super-smart machines will stop listening to and being controlled by humans. 3. Super-smart machines will have to decide whether to kill us or not.

If you disagree, that's fine. Let me know how wrong I am in the comments, and I'll probably respond.